I was recently at the PHIN 2007 conference facilitating a stakeholder group on collaboration and witnessed the following conversation with state and local public health partners:

Person A: We would benefit if we had a common strategy defined that we could follow.

Person B: Yes, we could define our processes so we could compare what we have in common and collaborate on systems.

A: Then we could create a plan for how to align our IT investment with business drivers. (Seriously, they said exactly this.)

Person C: That's called enterprise architecture, you're talking about enterprise architecture.

B: No, don't say that word.

A: Yeah, what does that even mean? We looked up "enterprise" in the dictionary and it means a risk-taking endeavor.

It's interesting to witness this for two reasons:

1) They've obviously encountered EA before and it left a bad taste in their mouth. This seems fairly common as a large portion of my engagements involve explaining how we aren't like those other EA guys who wasted their time and money.

2) It is an artificial term that reeks of IT speak.

As much as EA claims to be about the business and how business should drive EA, the EA organization is under the CIO/CTO in 9 out of 10 organizations (at least in the federal environment it is mandated by Clinger-Cohen). We constantly use technical jargon even when we don't think we are doing so.

Another recent anecdote comes from a conversation with a business steward about the difference between a data repository and a data warehouse. I said:

"The data repository contains the raw data received from partners. The data is then processed with business rules applied and loaded into the data warehouse. The data warehouse has analysis and structure for the aggregate data."

Now, granted, this was to explain the arbitrary definition of data warehouse/repository that the technical group had developed. But I was trying to clear up the confusion. The business steward is a brilliant person with a PhD and everything, but she said:

"Sorry, I don't know what you just said."

If EA really wants to engage with the business side of the organization, it needs to stop using geeky terms like "architecture", "enterprise", "service" (in an SOAy sense), etc.

A final example: In the FEA Practice Guide it says "Architect, Invest, Implement". What other practice verbs nouns as much as IT? I mean seriously, if you buy a house does your architect say "I'm going to go architect up your blueprint sir."

Anyway, the point of this post is that EA should strive to drop the IT vocabulary and talk like the business people it wants to be counted among.

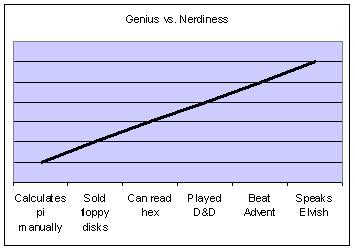

So as a youth I always equated technical prowess with nerdiness. Here's a very basic chart (showing Elvish before the movies came out, so it was much, much worse). I got to grow up around PC shops when they were run by super nerds who built their own boxes out of Computer Shopper and let you borrow Civ 1.
